When quakes hit, it’s not just the ground that shakes. How much the buildings above shimmy and buckle determines the number of people who are injured or die.

What’s the best way to determine that risk? Most of today’s models are too static to help solve the problem, but OpenStreetMap (OSM) is playing a key role in a new dynamic model.

“Our dream is to know every building. And what we want to know is the exact location, size, the number of people who live in it, building materials, its vulnerability and, potentially, the value for loss compensation,” says Danijel Schorlemmer of the Global Dynamic Exposure and OpenBuildingMap projects. “The challenge is to create a dynamic model in high resolution on a building-by-building scale.”

Schorlemmer’s career has taken him from the fault lines of Los Angeles to Tokyo working on earthquake prediction and risk assessment models. Now a senior scientist for the Global Earthquake Model (GEM) at the German Research Centre for Geosciences in Potsdam, he shared details in a brief breakout session at State of the Map in Heidelberg. (You can catch him talking more in-depth about it at the upcoming State of the Map Southeast Europe.)

The project uses crowdsourced data from OpenStreetMap, processing updates from OSM every 60 seconds. Researchers analyze the changes, looking for any edit that affects buildings. These changes include adding or removing a building or inserting details about building materials (wood frame, concrete) or the number of floors. From there, they assess as many exposure indicators as possible.
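To make the filtering step concrete, here is a minimal sketch of how building-related edits could be picked out of one OSM update. It assumes the update arrives as an OsmChange XML document and treats any element carrying a `building` or `building:*` tag as relevant. The function name `building_changes` and the sample data are hypothetical illustrations, not the project's actual pipeline.

```python
import xml.etree.ElementTree as ET


def building_changes(osc_xml):
    """Return (action, element_type, element_id, tags) for building-related edits.

    Hypothetical helper: assumes OsmChange XML with <create>, <modify>
    and <delete> blocks containing <node>, <way> or <relation> elements.
    """
    root = ET.fromstring(osc_xml)
    results = []
    for action in root:
        if action.tag not in ("create", "modify", "delete"):
            continue
        for element in action:
            # Collect the element's tags into a plain dict.
            tags = {t.get("k"): t.get("v") for t in element.findall("tag")}
            # Keep the edit if any tag marks or describes a building.
            if any(k == "building" or k.startswith("building:") for k in tags):
                results.append((action.tag, element.tag, element.get("id"), tags))
    return results


# Made-up sample update: one new wood building and one unrelated cafe edit.
SAMPLE = """<osmChange version="0.6">
  <create>
    <way id="1001" version="1">
      <nd ref="1"/><nd ref="2"/><nd ref="3"/><nd ref="1"/>
      <tag k="building" v="residential"/>
      <tag k="building:material" v="wood"/>
      <tag k="building:levels" v="2"/>
    </way>
  </create>
  <modify>
    <node id="5" version="3" lat="37.8" lon="-122.4">
      <tag k="amenity" v="cafe"/>
    </node>
  </modify>
</osmChange>"""

changes = building_changes(SAMPLE)
for action, kind, element_id, tags in changes:
    print(action, kind, element_id, tags)
```

Deleted elements may carry no tags in an update, so a real pipeline would probably need to look up the earlier version of an object to know whether a removed element was a building.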

The idea was to address a key limitation in exposure models: they’re low in either resolution or coverage due to the lack of data and are, by nature, static. OSM, and the volunteers who add information for about 100,000 buildings every day, help solve the problem. Risk is the combination of the natural hazard itself, the amplification of the shaking, and the exposure and its vulnerability, meaning all “human assets, whatever we as a society are interested in,” he adds.

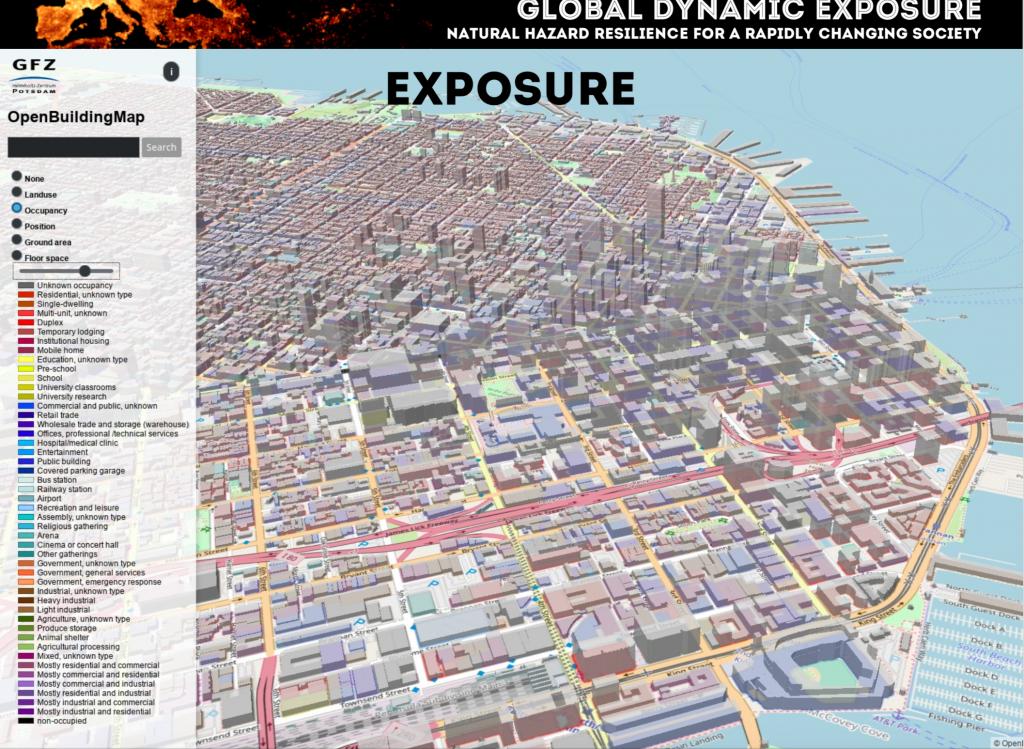

Schorlemmer offers an example of what earthquake exposure risk should look like with maps of seismically-challenged San Francisco.

The first is an overview with OpenBuildingMap — which you can check out online — showing occupancy types for the city. There are about 50 of them ranging from single-dwelling to concert hall to animal shelter.

Then there’s a building classification map that illustrates the predominance of wood-frame structures. Building on these, the next two detail what the damage might look like after a magnitude 6.9 temblor such as 1989’s Loma Prieta.

Another map shows the probability of moderate damage for every building. The riskier areas are downtown and in the Marina neighborhood, which was heavily damaged in previous quakes. It somewhat matches the shake map shown previously, but if you look in detail, Schorlemmer says, it’s not that simple.

“A classic exposure model for the city would take into account the percentage of wood-frame homes, steel structures etc. and then crunch the numbers for an aggregated loss estimate. We do it on a building-by-building scale,” says Schorlemmer. How much does it matter? Just look at a few earthquake photos and you’ll find plenty of structures that collapsed while their neighbors stand tall.
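The difference between the two approaches can be illustrated with a toy calculation. The sketch below is only an illustration under loud assumptions: the four buildings, the shaking values, the damage thresholds and the linear `damage_probability` function are all invented for the example and are not taken from the project or from any real fragility model.

```python
# Hypothetical shaking level at which damage starts, per building type.
THRESHOLD = {"wood": 0.3, "concrete": 0.5}
SPAN = 0.5  # hypothetical range over which damage probability rises from 0 to 1


def damage_probability(building_type, shaking):
    """Toy fragility curve: linear ramp above a type-specific threshold."""
    p = (shaking - THRESHOLD[building_type]) / SPAN
    return min(1.0, max(0.0, p))


# Invented buildings: (type, local shaking intensity at that building).
buildings = [
    ("wood", 0.2),
    ("wood", 0.6),
    ("concrete", 0.2),
    ("concrete", 0.6),
]

# Building-by-building: each structure is evaluated with its own shaking.
per_building = sum(damage_probability(t, s) for t, s in buildings)

# Aggregated: every structure is evaluated with the city-wide mean shaking.
mean_shaking = sum(s for _, s in buildings) / len(buildings)
aggregated = sum(damage_probability(t, mean_shaking) for t, _ in buildings)

print("expected damaged buildings, per building:", round(per_building, 3))
print("expected damaged buildings, aggregated:  ", round(aggregated, 3))
```

With these made-up numbers the aggregated estimate comes out lower than the per-building one, because averaging the shaking hides the structures that sit where the ground moves most.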

Accurately predicting risk influences right-sized disaster response (emergency management, rescue, shelter) and boosts overall awareness. That’s why governments around the world in earthquake-prone areas have put money on the table for prediction models. Here’s hoping that the power of open source and open data will produce the most accurate one.
